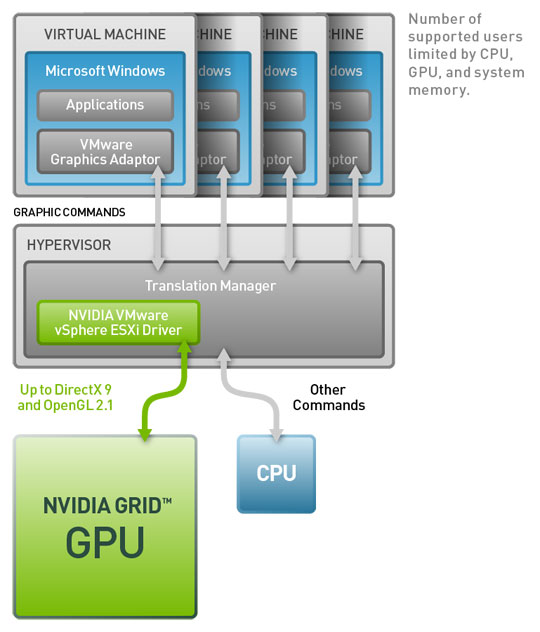

In Part 2, we installed the VIB and configured ESXi to accept the graphics card. Now we need to build a pool of desktops that uses vSGA and compare the results against a non-vSGA VM. Here’s a detailed breakdown of the pool settings. I will walk through each step and note some things I’ve learned about pool creation with regard to vSGA along the way.

- Pool Type: Automatic

- User Assignment: Floating

- vCenter Server: View Composer Linked Clones

- Pool ID: vSGA_Test_Pool

- Pool Display Name: vSGA Workstations

- Pool Description: Pool of Desktops evaluating vSGA Graphics Virtualization

- General State: Enabled

- Connection Server Restrictions: None

- Remote Desktop Power Settings: Take No Power Action

- Automatically Logoff After Disconnect: Never

- Allow Users to Reset Their Desktops: Yes

- Allow Multiple Sessions per User: No

- Delete or Refresh Desktop Upon Logoff: Refresh Immediately

- Default Display Protocol: PCoIP

- Allow User to Choose Protocol: No

- 3D Renderer: Automatic (512MB)

- Max Number of Monitors: 2

- HTML Access: Enabled

- Adobe Flash Settings: Default

- Provisioning – Basic Settings: Both Enabled

- Virtual Machine Naming – Use a Naming Pattern: vSGATest{n:fixed=2}

- Max Number of Desktops: 8

- Number of Spare Desktops: 1

- Minimum Number of Provisioned Desktops during Maintenance: 0

- Provision Timing: Provision All Desktops Up Front

- Disposable File Redirection: 20480 MB

- Replica Disks: Replica and OS will remain together

- Parent VM: TestGold

- Snapshot: vSGA Prod SS

- VM Folder Location: Workstations

- Cluster: vSGA Cluster

- Resource Pool: vSGA Cluster

- Datastores: SynologySSD

- Use View Storage Accelerator: OS Disks – 7 Days

- Reclaim Disk Space: 1 GB

- Blackout Times: 08:00-17:00, Monday through Friday

- Domain: View Service Account

- AD Container: AD Path

- Use QuickPrep: No Options

- Entitle Users after Wizard Finishes: Yes
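To make the naming pattern in the settings above concrete, here is a minimal Python sketch of how `vSGATest{n:fixed=2}` would expand for a pool of 8 desktops. The `expand_names` helper is hypothetical and written only for illustration; it is not part of View, and it assumes numbering starts at 1 with zero padding to the fixed width.

```python
import re
from typing import List

# Matches the {n} or {n:fixed=N} token used in the pool naming pattern.
_TOKEN = re.compile(r"\{n(?::fixed=(\d+))?\}")


def expand_names(pattern: str, count: int, start: int = 1) -> List[str]:
    """Return `count` machine names generated from `pattern`.

    Hypothetical helper: assumes numbering starts at `start` and that
    `fixed=N` means zero padding to N digits.
    """
    match = _TOKEN.search(pattern)
    if match is None:
        raise ValueError("pattern has no {n} token")
    width = int(match.group(1)) if match.group(1) else 0
    prefix, suffix = pattern[:match.start()], pattern[match.end():]
    return [prefix + str(n).zfill(width) + suffix
            for n in range(start, start + count)]


names = expand_names("vSGATest{n:fixed=2}", 8)
print(names[0], names[-1])  # vSGATest01 vSGATest08
```

With a fixed width of 2, the pattern leaves room for up to 99 desktops before the names would grow past two digits.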

From those pool settings, there are a few things I want to point out. You must force all sessions to use PCoIP (Default Display Protocol set to PCoIP and Allow User to Choose Protocol set to No) so that the Automatic, Hardware and Software options of the 3D Renderer are available. After all, the secret sauce to Horizon View is PCoIP!
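The PCoIP rule can be written down as a small pre-flight check. This is only a sketch in Python: the dictionary keys and the `renderer_options_available` function are made-up names for illustration, not a View API, and the values simply mirror the pool settings listed above.

```python
def renderer_options_available(pool: dict) -> bool:
    """Return True when sessions are forced onto PCoIP.

    Hypothetical check: mirrors the rule that the 3D Renderer choices
    only open up when PCoIP is the default protocol and users cannot
    pick a different one.
    """
    return (pool.get("default_display_protocol") == "PCoIP"
            and pool.get("allow_user_to_choose_protocol") is False)


# Values copied from the pool settings in this post; key names are assumptions.
vsga_pool = {
    "default_display_protocol": "PCoIP",
    "allow_user_to_choose_protocol": False,
    "renderer_3d": "Automatic",
    "vram_mb": 512,
}

print(renderer_options_available(vsga_pool))  # True
```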

I set the 3D Renderer on vSGA pools to “Automatic” so that, if I have other desktops on this cluster of hosts, they aren’t fighting for GPU resources that aren’t needed; I can relinquish GPU resources for other desktop workloads. The Gold Images we use contain some beefy applications (AutoCAD, Revit, Navisworks, etc.), so we like our disposable disks large enough that central model caching and other cached loads land outside the persistent disk.

It seems silly, but I like it when my users can reset their own machines. Depending on the current workload, help desk resolution could be delayed by minutes, so there’s no need to have my users wait on us! For this test I am going to push the 3D Renderer to 11: 512MB of video memory is overkill for most task workers, but our CAD guys have enjoyed the higher setting.

Lastly, View Storage Accelerator and disk space reclamation are a big help: the accelerator caches common OS disk reads on the host, and reclamation recovers unused disk space 1GB at a time. I have set the blackout window to normal business hours to protect the SAN from unwanted IOPS spikes. You should see the vCenter notifications at 5:01... it looks like a stock ticker tape!
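To illustrate the blackout window from the pool settings (08:00 to 17:00, Monday through Friday), here is a small Python sketch that tests whether a given moment falls inside it. The `in_blackout` function and its constants are hypothetical, and treating the window as ending exactly at 17:00 is an assumption; this is not how View itself evaluates the schedule.

```python
from datetime import datetime, time

# Assumed window, taken from the pool settings: weekdays, 08:00 to 17:00.
BLACKOUT_DAYS = {0, 1, 2, 3, 4}  # Monday=0 ... Friday=4
BLACKOUT_START = time(8, 0)
BLACKOUT_END = time(17, 0)


def in_blackout(moment: datetime) -> bool:
    """Return True if `moment` falls inside the business-hours blackout.

    Hypothetical helper: the end of the window is exclusive here, so
    17:00 itself is already outside the blackout.
    """
    return (moment.weekday() in BLACKOUT_DAYS
            and BLACKOUT_START <= moment.time() < BLACKOUT_END)


print(in_blackout(datetime(2014, 1, 6, 9, 0)))    # Monday morning: True
print(in_blackout(datetime(2014, 1, 6, 17, 1)))   # Monday 5:01 pm: False
```

Anything that returns False here is a moment when the storage maintenance operations are free to run, which is why the notifications pile up just after 5:00.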

Now that we have built our Pool, we can entitle our group or users and let them log in and start playing with vSGA enabled virtual desktops.

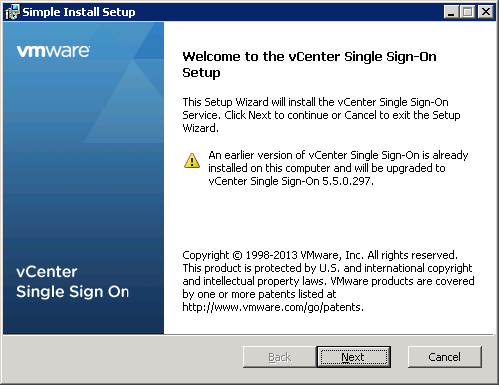

Our test group of users was impressed with the fluid motion of Google Earth, AutoCAD, Revit and Navisworks. Is it amazing? Yes, for the ability to provision multiple workloads to a single GPU, and no, because it doesn’t get us to that 100% physical experience just yet. Is it a step in the right direction for fully virtualizing GPUs? Absolutely! I hope this small 3-part series has been informative. I will be back soon with a 3-part series on vDGA for Horizon View 5.3, but it would be nice to have a walkthrough on how to upgrade to Horizon View 5.3 first... up next!
