Well, some time has certainly passed since I last wrote about vSGA & vDGA! I think everyone knew the technology would eventually arrive that lets us dynamically allocate graphics hardware to our virtual machines, beyond direct mapping. VMware and NVIDIA announced vGPU several months ago. To be honest, Citrix has had this technology for a few years (thanks, exclusivity contracts), and it has been making some major waves in the AEC and OGE industries. Since my day job focuses mostly on VMware and their product offerings, I felt it was appropriate to write a few articles about vGPU and how it is configured with VDI.

vSGA and vDGA

Let’s run through a quick overview of Shared Graphics and Direct Graphics allocation, as it will help explain why vGPU is such a groundbreaking graphics technology for virtualization.

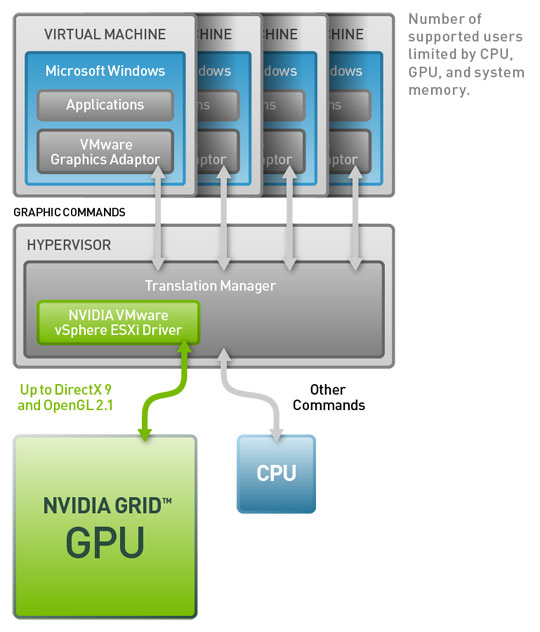

vSGA gives you the ability to provision multiple VMs (Linked-Clones or Full VMs) to a single GPU or multiple GPUs. Graphics cards are presented to the VM as a software video driver, and the graphics processing is handled by an ESXi driver (VIB). Graphics resources are reserved on a first-come, first-served basis, so sizing and capacity are important to consider.

vDGA differs from vSGA in that the physical GPU is assigned to the VM using DirectPath I/O, so the full GPU is dedicated to a specific machine. Where vSGA allows multiple VMs to provision resources from the GPU, with vDGA you install the full NVIDIA GPU driver package in the VM and the graphics card shows up as hardware in Device Manager. As of Horizon View 5.3.X and 6.X, vDGA is out of Technical Preview and fully supported. vDGA has also expanded its support for graphics boards, including the GRID K1, K2 & newly announced GRID 2.0 graphics boards.

What is vGPU?

With vSGA we had shared allocation from one or more graphics boards across multiple virtual machines, and with vDGA we had direct mapping of a physical graphics board. Now let’s talk about vGPU. vGPU is a technology with multiple layers: an ESXi VIB driver that acts as a GPU manager for ESXi, certified NVIDIA vGPU profiles for virtual machines, and NVIDIA GRID software driver packages for the Windows operating system inside the virtual machine. Each layer serves a specific function, and together they create a valid and certified driver experience for Windows applications like AutoCAD, Revit, SolidWorks, and other 3D rendering applications. So let’s dive into the technology that gives us the rich experience for VDI.

As opposed to direct allocation in vDGA, vGPU allows each desktop to be assigned a specific amount of graphics RAM based on a certified vGPU profile. Those profiles come in various sizes based on common use cases. The benefit of this profile-based graphics allocation is that there is no translation of graphics like in vSGA; the GPU commands are sent directly to the graphics board using the NVIDIA vGPU manager inside the hypervisor. It is similar to the technology that is used with physical network adapters, but obviously carving up graphics RAM is different from carving up IP traffic. The amount of RAM ranges from 512MB – 4GB depending on the graphics board(s) installed in your servers. I have listed the profiles for GRID 1.0 below, and I will talk about GRID 2.0 profiles later on (yup, it is different).

As you can see, there are a ton of potential profiles that you can assign to users. You need to weigh these profiles carefully, because the profile you pick determines the maximum number of users each board can host, and that adds up quickly. I have worked with several customers to establish baselines from existing physical workstations and determine the proper vGPU profile to use.
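To make the sizing trade-off concrete, here is a rough back-of-the-envelope sketch in Python. It assumes that the user limit is driven purely by how many fixed-size frame buffer slices fit on each physical GPU, which is a simplification: the real per-profile limits come from NVIDIA's published profile tables and can be lower. The function names and the example board (4 GPUs with 4096 MB each) are hypothetical and only there for illustration.

```python
import math


def users_per_board(gpus_per_board: int, framebuffer_mb_per_gpu: int, profile_mb: int) -> int:
    """Upper bound on desktops per board when each desktop gets a fixed frame buffer slice.

    Assumption: frame buffer is the only limiting factor. Vendor tables may cap
    the count lower than this for some profiles.
    """
    if profile_mb <= 0 or profile_mb > framebuffer_mb_per_gpu:
        raise ValueError("profile size must be between 1 MB and the per-GPU frame buffer")
    # A profile cannot span physical GPUs, so divide per GPU first, then multiply.
    return (framebuffer_mb_per_gpu // profile_mb) * gpus_per_board


def boards_needed(user_count: int, per_board: int) -> int:
    """Number of boards required to host user_count desktops, rounded up."""
    if per_board <= 0:
        raise ValueError("per_board must be positive")
    return math.ceil(user_count / per_board)


if __name__ == "__main__":
    # Hypothetical board: 4 physical GPUs, 4096 MB of frame buffer each.
    for profile_mb in (512, 1024, 2048, 4096):
        capacity = users_per_board(4, 4096, profile_mb)
        print(f"{profile_mb} MB profile: up to {capacity} users per board, "
              f"{boards_needed(100, capacity)} boards for 100 users")
```

Doubling the profile size halves the density, so moving 100 users from a 1 GB slice to a 2 GB slice roughly doubles the number of boards you need. That is why baselining the physical workstations first is worth the effort.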

NVIDIA vGPU with GRID 2.0

NVIDIA announced at VMworld 2015 the release of their new line of GRID graphics boards (PCIe and MXM) called... you guessed it, GRID 2.0. With GRID 1.0 we had two board options for PCIe, the K1 & K2. GRID 2.0 also has two graphics boards: the Tesla M60 for PCIe and the Tesla M6 for MXM blade servers (vendor specific). On paper, the Tesla M60 compares more to the K2 card, with the density of the K1. The new Tesla M60 has the ability to serve up to 32 vGPU users on a single card, but there is a catch to all that awesomeness... licensing.

Wait, what? NVIDIA is in the licensing business? Unfortunately, yes they are. The old GRID 1.0 model of choosing among the various vGPU profiles depending on the card you purchased is long gone. With GRID 2.0 you license based on the use case that you want to deliver. I have a picture of the 3 use case options with the associated vGPU profiles they offer below.

So let’s talk about licensing now. NVIDIA makes certain vGPU profiles available based on which of three NVIDIA GRID experiences you license: Virtual PC, Virtual Workstation and Virtual Workstation Extended. Each experience can be licensed for a specific number of users connected to the various profiles at that level. With GRID 1.0 you had three steps to implementing vGPU: installing the NVIDIA VIB driver, assigning a vGPU profile to a virtual machine, and installing the NVIDIA vGPU OS driver package. Now with GRID 2.0 there are two more items on that list, the Licensing Manager and the GPU Mode Change Utility. The Licensing Manager can be installed on a Windows or Linux instance, and this is where you will apply the license file you purchased from one of NVIDIA’s distributors. The GPU Mode Change Utility is used to change the mode of the Tesla M60/M6 from compute mode to graphics mode, as the boards ship with graphics mode restricted. Changing the mode is what allows you to provision vGPU profiles to virtual machines. Keep in mind that licensing for the Linux OS is only available with the two higher experiences. I will go through setting up GRID 2.0 profiles in Part 3 of this vGPU blog series, as there are some tweaks that have to happen to activate the licensing before you can get a vGPU profile.

Next up is Part 2 of the vGPU Series – GRID Installation & Configuration.
